Incident Summary: A Record-Breaking Breach Unfolds

In June 2025, the digital world entered a new era of vulnerability. A massive breach involving more than 16 billion active credentials was discovered across several darknet marketplaces. This “megaleak” surpasses all previously known data breaches—both in sheer volume and in the freshness and diversity of the stolen data.

Unlike historical leaks that often stemmed from isolated server-side intrusions, this attack relied on a silent, distributed compromise executed on a massive scale using highly specialized malware. It reveals a deep transformation of cybercrime, where digital identity becomes a commodity, a weapon, and a tool of foreign interference.

Although the dataset is being presented as a new breach, several cybersecurity analysts have pointed out that it likely includes credentials from older leaks — such as RockYou2021 and earlier credential-stuffing compilations. This raises an important question: are we facing a new mega-leak or an inflation of existing records? Either way, the risk remains real — particularly because infostealers do not care how old a credential is, as long as the session is still valid.


Darknet Credentials Breach 2025: A Global Digital Heist

Discover the true scope of the darknet credentials breach that shook the digital world in 2025. This unprecedented leak involved over 16 billion active identifiers and marks a dangerous shift in cybercriminal operations. From stealthy exfiltration to identity abuse and geopolitical espionage, this report unpacks the anatomy of the largest cyber credential heist ever recorded.

16+ Billion

Credentials leaked worldwide, redefining the scale and depth of modern identity theft operations.

Stealthy Exfiltration: How 16 Billion Credentials Were Stolen

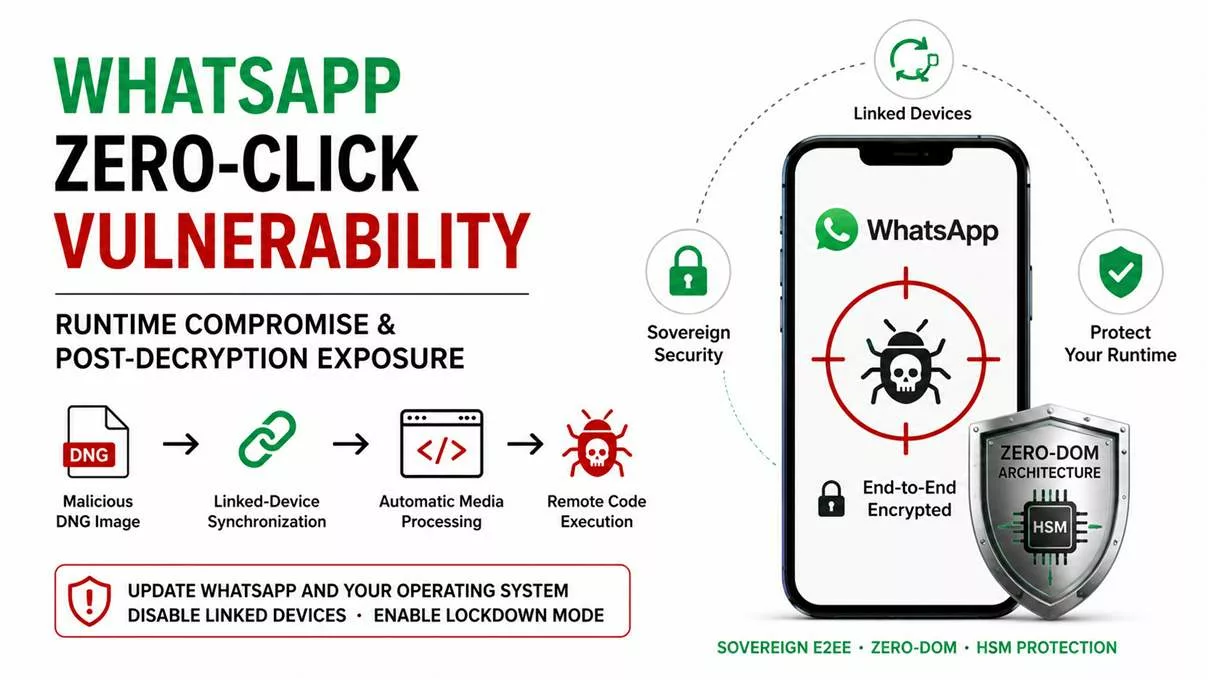

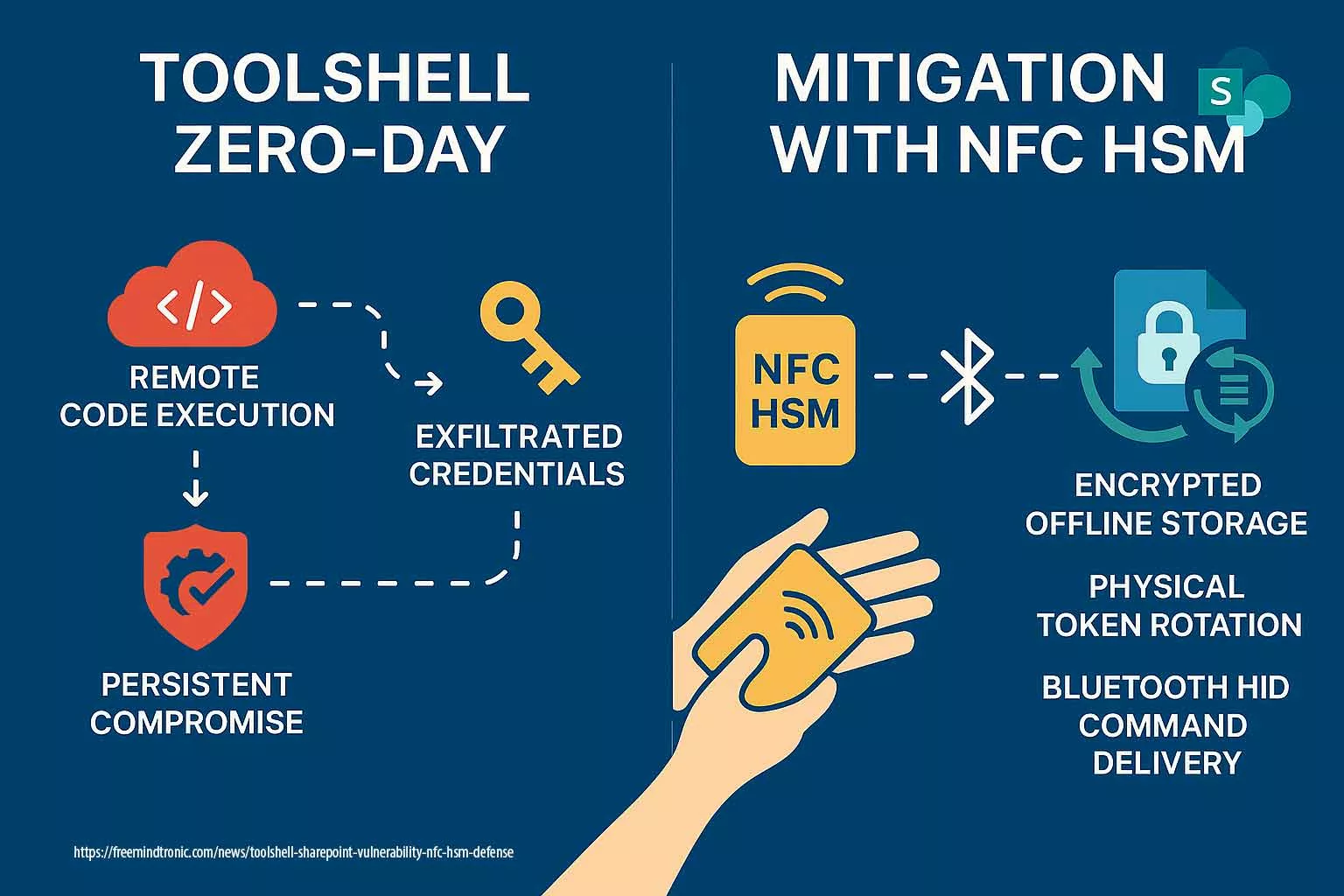

The 2025 darknet credentials breach was not the result of server-side intrusions, but of widespread client-side compromise. Sophisticated infostealer malware such as LummaC2, RedLine, and Titan evolved to bypass traditional antivirus tools and extract session tokens, login credentials, and encrypted vaults with surgical precision.

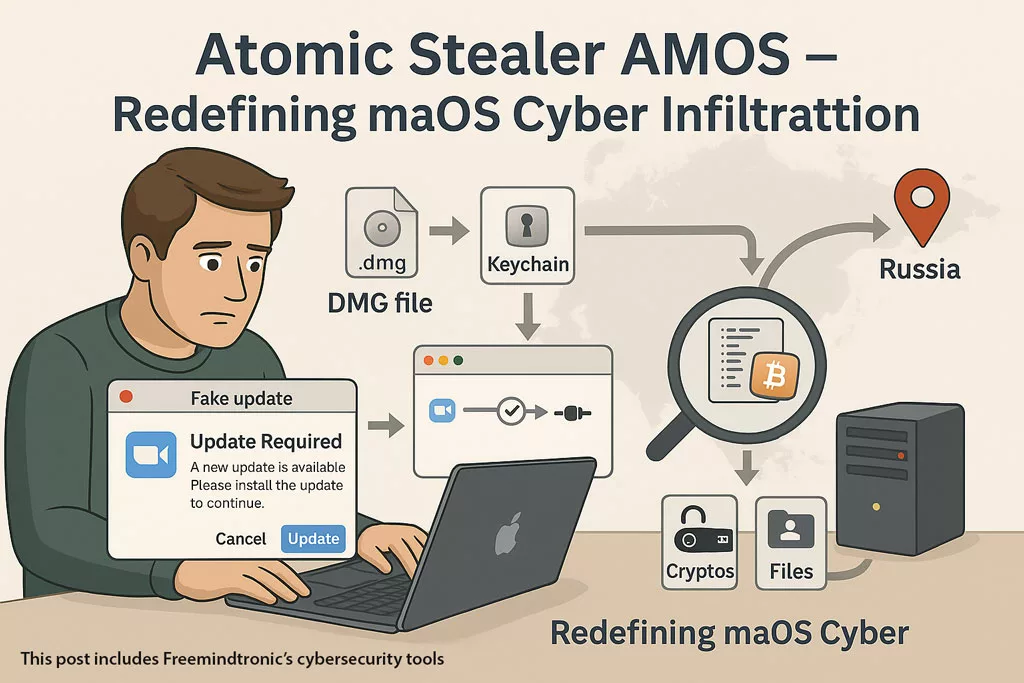

- Infostealer Payloads: Deployed via cracked software, fake browser updates, and malvertising, exfiltrating data silently to Telegram bots and private C2 servers.

- Cookie Hijacking: Session hijacks from Google, Microsoft, and GitHub accounts allowed direct impersonation—even bypassing MFA.

- Clipboard Scrapers: Targeted password managers, crypto wallets, and 2FA copy-paste operations, stealing sensitive content in real time.

- Telegram Exfil Channels: Over 60% of the data was exfiltrated via Telegram bots, enabling real-time credential leaks with minimal traceability.

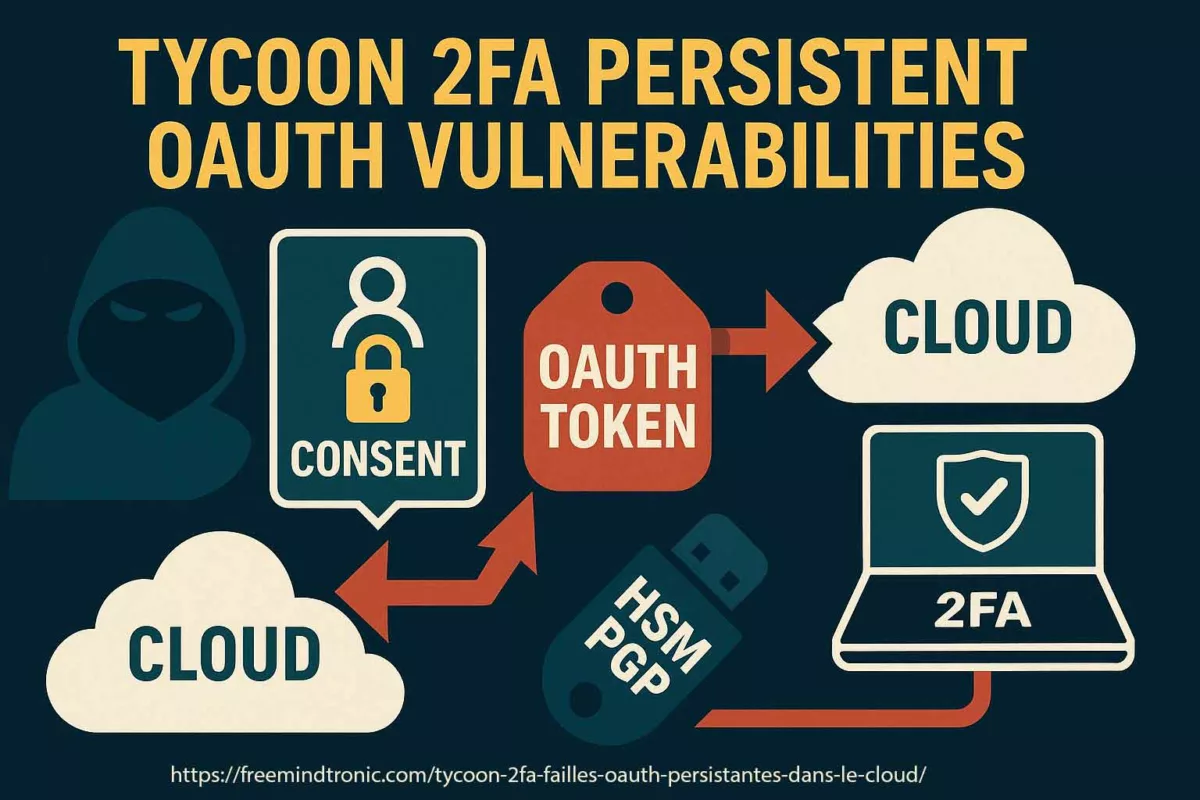

- OAuth Abuse: Attackers exploited persistent GitHub OAuth tokens to access developer tools, repositories, and secrets without triggering alerts.

- BitB Attacks: Browser-in-the-Browser phishing pages harvested login credentials using cloned interfaces with perfect mimicry.

Who Was Targeted in the 2025 Breach?

This breach was not random. Behind the 16 billion compromised identifiers lies a calculated selection of high-value targets spanning continents, sectors, and platforms. A breakdown of exposed credentials shows that this was a data-driven cyber operation designed for maximum strategic disruption.

- Government Entities: Email accounts of high-ranking officials, internal portals, and cloud credentials linked to diplomatic and intelligence operations.

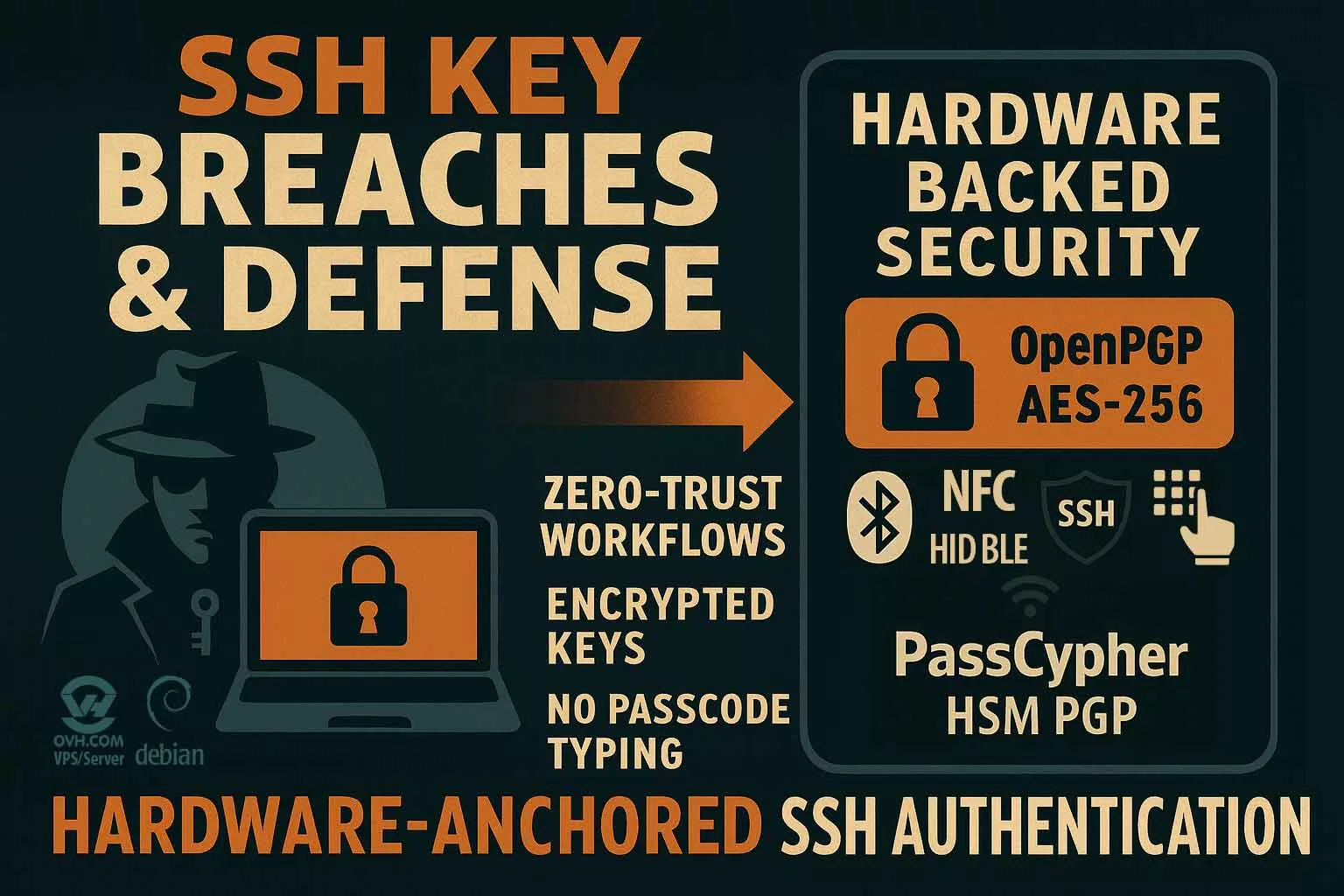

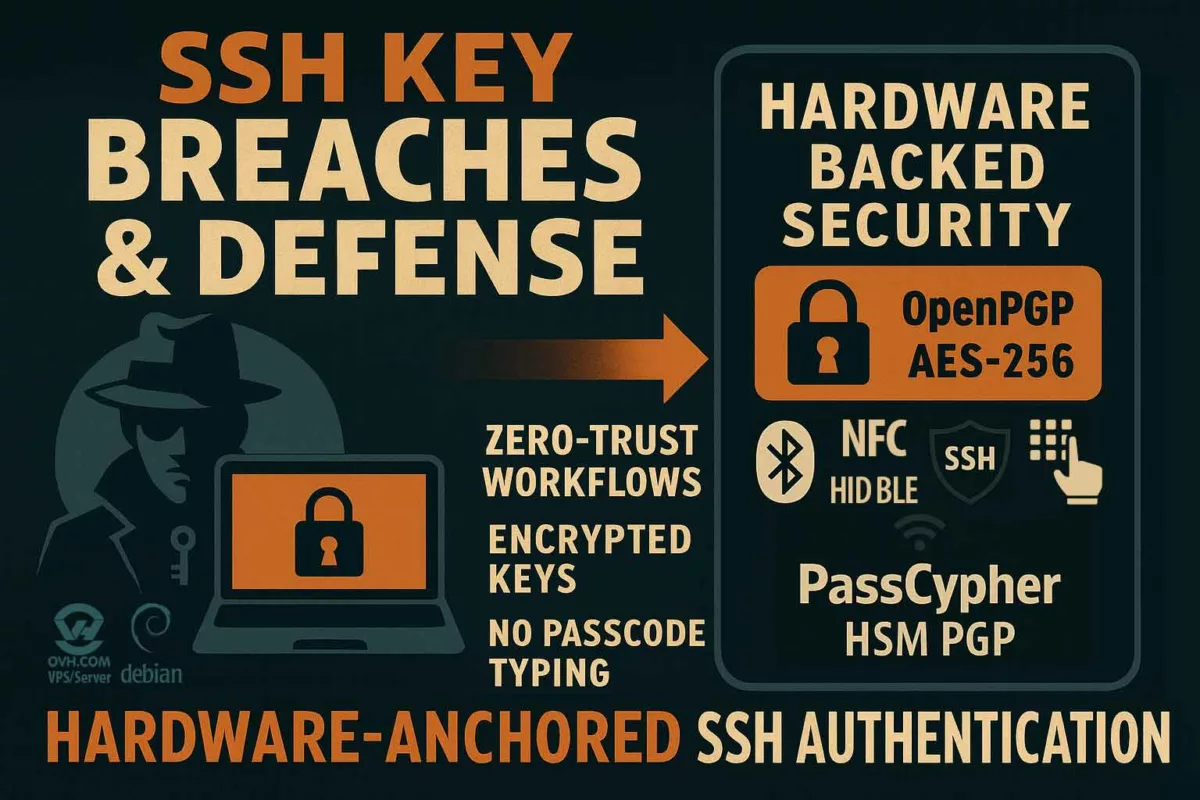

- Developers & IT Admins: Credentials linked to GitHub, SSH keys, API tokens, and internal tools—opening attack surfaces for software supply chains.

- Telecom & Infrastructure: VPN, VoIP, and backend access credentials tied to major telecom operators in Europe, the Middle East, and Asia.

- Journalists & Activists: Secure email platforms, PGP key leaks, and social media credentials exposed in authoritarian regions.

- Enterprise Credentials: Active logins to Microsoft 365, Google Workspace, Slack, and Zoom—many with elevated privileges or SSO access.

- Healthcare & Finance: EMR portals, insurance platforms, banking credentials—targeting identity validation and digital fraud channels.

Nature and Origin of Data: A New Class of Digital Assets Compromised

The 2025 mega-leak is remarkable not just for its scale, but for the nature and diversity of the compromised data. Unlike past breaches, which were mostly limited to email-password pairs or hashed dumps, this leak reveals dynamic, real-time identity layers.

The dataset is largely composed of infostealer logs—files generated on compromised endpoints. These contain plaintext credentials, active session cookies, browser autofill data, password vault exports, crypto seed phrases, 2FA backup codes, and even system fingerprints. These logs allow immediate impersonation across services without requiring password resets or MFA tokens.

How Was the Data Acquired?

Most of the data originated from compromised personal and enterprise endpoints, harvested by malware strains such as LummaC2, Raccoon Stealer 2.3, and RedLine. These infostealers are capable of exfiltrating full identity profiles from infected machines in seconds, often without triggering detection systems.

They exploit weak security hygiene such as:

- No hardware-backed vault protection

- Poor browser security settings

- Reuse of weak passwords

- Unsafe software downloads (cracks, warez, fake updates)

What Type of Data Was Leaked?

- Plaintext Logins: Emails and passwords for thousands of platforms (Microsoft, Apple, Google, Facebook, TikTok, etc.)

- Session Tokens: Cookies and JWTs enabling instant login without passwords or MFA

- Vault Extracts: Exfiltrated files from KeePass, Bitwarden, 1Password, and Chromium-based password managers

- Crypto Wallet Seeds: Recovery phrases, keystore files, and hot-wallet tokens (MetaMask, Phantom, TrustWallet)

- Browser & Device Fingerprints: IP, location, hardware specs, OS info, browser versions, and language preferences
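Session tokens are only dangerous while they remain valid, so triaging a leaked JWT usually starts with its expiry. The following is a minimal Python sketch that assumes a standard three-part JWT carrying a numeric `exp` claim (RFC 7519). It decodes the payload without verifying the signature, so it is suitable for triage only and never for trust decisions. The helper names `jwt_expiry` and `is_still_live` are hypothetical.

```python
import base64
import json
import time
from typing import Optional


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are base64url without padding; restore it before decoding.
    padding = "=" * (-len(segment) % 4)
    return base64.urlsafe_b64decode(segment + padding)


def jwt_expiry(token: str) -> Optional[int]:
    """Return the `exp` claim (Unix seconds) of a JWT, or None if absent.

    The signature is NOT verified: this is for triaging leaked tokens only.
    """
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("not a three-part JWT")
    payload = json.loads(_b64url_decode(parts[1]))
    exp = payload.get("exp")
    return int(exp) if exp is not None else None


def is_still_live(token: str, now: Optional[float] = None) -> bool:
    """True if the token has no expiry or its expiry lies in the future."""
    exp = jwt_expiry(token)
    current = time.time() if now is None else now
    return exp is None or exp > current
```

A token with no `exp` claim is treated as live because nothing in the token itself bounds its lifetime. Only server-side revocation can end such a session.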

In response, PassCypher NFC HSM and HSM PGP secure authentication by storing cryptographic keys in tamper-proof hardware that no remote attacker — not even an AI-powered one — can forge, duplicate, or intercept.

Key Sources of Infection

The compromised data points to a global spread of malware through:

- Pirated software and cracked installers

- Fake browser updates or Flash installers

- Email phishing attachments

- Malvertising (malicious ad networks)

- Discord, Telegram, and gaming communities

These infection chains reveal how attackers exploited trust ecosystems, disguising malicious payloads within platforms frequented by developers, gamers, and crypto users.

Exfiltration Methods: Silent, Distributed, and Highly Scalable

The exfiltration of over 16 billion credentials in 2025 wasn’t just massive; it was surgically precise. Threat actors orchestrated a global-scale theft using modular infostealers and encrypted communication layers. These methods enabled real-time credential leakage with minimal detection risk.

Command-and-Control Channels: Telegram, Discord, and Beyond

The majority of logs were exfiltrated via Telegram bots configured to auto-forward stolen data to private channels. These bots used token-based authentication and self-deletion mechanisms, making traditional monitoring tools ineffective.


Strategic Insight: Over 60% of the logs recovered from darknet forums showed clear Telegram-origin metadata, pointing to wide-scale use of bot automation.

Discord also played a role, especially in targeting gaming communities and developers. Malicious bots embedded in servers silently captured credentials and pushed them via webhooks to remote dashboards.
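Telegram exfiltration leaves a recognizable network pattern, because every Bot API call has the form `https://api.telegram.org/bot<token>/<method>`. The following is a minimal defender-side sketch. It assumes a TLS-inspecting proxy that logs full request URLs, since without inspection only the host `api.telegram.org` is visible. It also assumes a hand-picked watchlist of upload methods. The log format and the name `flag_bot_exfil` are hypothetical.

```python
import re
from typing import Iterable, List, Tuple

# Bot API calls look like https://api.telegram.org/bot<bot id>:<secret>/<method>.
BOT_API_CALL = re.compile(
    r"https?://api\.telegram\.org/bot(?P<bot_id>\d+):[A-Za-z0-9_-]+/(?P<method>\w+)",
    re.IGNORECASE,
)

# Methods that push data out of the network (assumed, non-exhaustive watchlist).
UPLOAD_METHODS = {"senddocument", "sendmessage", "sendphoto", "sendmediagroup"}


def flag_bot_exfil(log_lines: Iterable[str]) -> List[Tuple[int, str, str]]:
    """Return (line number, bot id, method) for each Bot API upload call seen."""
    hits = []
    for lineno, line in enumerate(log_lines, start=1):
        for match in BOT_API_CALL.finditer(line):
            method = match.group("method")
            if method.lower() in UPLOAD_METHODS:
                # Report only the numeric bot id, never the full token secret.
                hits.append((lineno, match.group("bot_id"), method))
    return hits
```

Reporting the bot id instead of the whole token keeps the alert useful for correlation without re-leaking the attacker's credential into ticketing systems.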

Malware Stealth Techniques: Evasion and Persistence

Infostealers like LummaC2, Redline, and Raccoon 2.3 embedded stealth modules to:

- Disable Windows Defender and bypass AMSI

- Inject payloads into trusted processes (svchost, explorer.exe)

- Encrypt stolen data with custom XOR+Base64 algorithms before exfiltration
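A layer like this is obfuscation rather than encryption. Once an analyst recovers the key from one sample, every log produced by that build decodes. The following is a minimal sketch of reversing such a layer. It assumes the malware XORed the data with a short repeating key and then Base64-encoded it, although real families vary the order and key handling. The helper names are hypothetical.

```python
import base64
from itertools import cycle


def xor_bytes(data: bytes, key: bytes) -> bytes:
    # XOR each byte with the repeating key; applying it twice restores the input.
    if not key:
        raise ValueError("key must not be empty")
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


def decode_xor_b64(blob: str, key: bytes) -> bytes:
    """Undo an assumed 'XOR with repeating key, then Base64' layer."""
    return xor_bytes(base64.b64decode(blob), key)
```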

The malware lifecycle was short-lived but potent: designed for single-use log theft, then self-deletion. This limited forensics and delayed incident response.

Phishing-Free Exfiltration via Fake Updaters

No need for phishing emails. Attackers embedded payloads into fake installers for browsers, media players, and antivirus tools. These were promoted via:

- Malvertising on adult sites and torrent platforms

- SEO poisoning leading users to fake clone sites

- “Browser Update Required” overlays triggering malicious downloads

Payload Delivery Methods

- Cracked software (often bundled with malware via forums and Telegram groups)

- Fake installers mimicking Chrome, Brave, and Firefox updates

- Weaponized PDFs and Office macros triggering drive-by downloads

⚠️ Operational Note: Logs were often exfiltrated to C2 servers registered in rare TLDs (.lol, .cyou, .top), making IP reputation-based blocking ineffective.
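Because domains in these TLDs are cheap and short-lived, matching on the TLD in DNS or proxy logs can complement IP reputation as a coarse check. The following is a minimal sketch that assumes queried hostnames have already been extracted. The watchlist comes from the note above. Legitimate sites also use these TLDs, so hits should be treated as leads for review rather than automatic blocks.

```python
from typing import Iterable, List

# TLDs named in the operational note above; extend to match your own telemetry.
WATCHED_TLDS = {"lol", "cyou", "top"}


def tld_of(hostname: str) -> str:
    # Normalize case and drop a trailing root dot ("evil.top." -> "top").
    return hostname.lower().rstrip(".").rsplit(".", 1)[-1]


def flag_watched_tlds(hostnames: Iterable[str]) -> List[str]:
    """Return hostnames whose top-level domain is on the watchlist."""
    return [h for h in hostnames if tld_of(h) in WATCHED_TLDS]
```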

Browser Hijacks and AutoFill Abuse

Once inside a system, malware extracted:

- Session tokens from browser cookies (bypassing login screens)

- Autofill form data (names, addresses, phone numbers, card info)

- Saved credentials from Chromium vaults and localStorage APIs

Some payloads injected JavaScript into active browser sessions, capturing credentials before submission, making even secure pages vulnerable.

Victim Profiles: From Diplomats to Developers

This massive breach wasn’t indiscriminate. On the contrary, the leaked credentials reflect deliberate and **strategic targeting** of users and organizations with high-value access points. The 16+ billion identifiers mapped out a digital battlefield across continents and sectors.

Governments and Public Institutions

Hundreds of thousands of credentials were traced back to:

- Diplomatic corps and foreign ministry portals

- Intelligence-linked accounts using Microsoft 365 or ProtonMail

- Sensitive platforms used by EU, Gulf, and ASEAN governments


Strategic Insight: These accounts allowed impersonation at the highest diplomatic levels—without needing to break into state servers.

Developers and System Administrators

Exposed data includes:

- SSH keys, GitHub OAuth tokens, Jenkins login sessions

- Access to devops pipelines, CI/CD dashboards, and production vaults

- API secrets connected to Amazon AWS, Azure, and Google Cloud projects

These credentials are a launchpad for software supply chain attacks, allowing infiltration far beyond the initial victim.

Enterprises and Cloud SaaS Platforms

Stolen enterprise credentials gave direct access to:

- Microsoft 365 and Google Workspace sessions (many with SSO)

- Zoom, Slack, Atlassian, Salesforce logins

- Admin panels of e-commerce and banking apps

The breach also included access to customer support dashboards, exposing sensitive user communications and KYC documents.

Telecom and Infrastructure Providers

- VPN endpoints and NOC portals in Europe and the Middle East

- Privileged logins to VoIP, fiber provisioning, and 5G orchestration tools

- Backend access to telecom SaaS used by ISPs and mobile operators

Journalists, Activists, and NGOs

Targeted individuals operating in:

- Authoritarian or hybrid regimes (Russia, Iran, China, Belarus, Myanmar)

- Platforms like ProtonMail, Signal, Tutanota, and Mastodon

- Credentials enabling the takeover of anonymous social channels

Healthcare and Financial Systems

- Active sessions to EMR systems, health insurance databases

- Leaked IBANs, SWIFT codes, crypto wallet access

- Identity validation bypasses for fintech services (Stripe, Revolut, Wise)

⚠️ Operational Note: Many stolen credentials had not expired at the time of discovery, allowing active impersonation months after the initial leak.

Up Next: The Cybercrime Ecosystem Monetizing Your Identity

Next, we explore how these stolen credentials are traded, resold, and automated on darknet platforms, turning each login into a revenue-generating asset for cybercriminals across the globe.

Who Got Hit the Hardest?

By Victim Category (Estimates from 16B credentials sample):

| Victim Category | Share (%) |
|---|---|
| Enterprise SaaS & Cloud Accounts | 32% |
| Developers & IT Admins | 21% |
| Government & Public Sector | 14% |
| Finance & Insurance Platforms | 11% |
| Telecom & Infrastructure | 8% |
| Healthcare Systems | 7% |
| Journalists, Activists & NGOs | 4% |
| Other Personal Accounts | 3% |

By Region (Top 5):

| Region | Share (%) |
|---|---|
| United States | 24% |
| European Union (incl. France, Germany, Italy) | 19% |
| India & Southeast Asia | 15% |
| Middle East (incl. UAE, Israel, KSA) | 13% |
| Russia & Ex-Soviet States | 11% |

Additional Insights: The Scale and Velocity of Credential Leaks

- Infostealer data surge (2024): According to Bitsight and SpyCloud, the volume of logs on underground forums containing cookies, session tokens, and browser data rose by 34%.

- Credential saturation per victim: SpyCloud reports that the average victim had 146 compromised records, spanning multiple platforms—highlighting widespread account reuse and poor credential hygiene.

- Rapid session hijacking: As reported by The Hacker News, 44% of logs now include active Microsoft sessions, with exfiltration typically occurring via Telegram within 24 hours.

💡 These trends reveal how credentials aren’t just stolen; they’re weaponized with growing speed, making reactive defenses increasingly obsolete.

Insight: Targets were not random. The strategic nature of the breach reveals cyber operations tailored to economic influence, software supply chain disruption, and geopolitical destabilization.

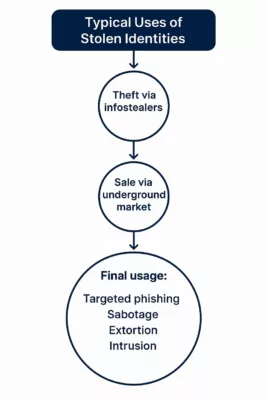

Underground Market: The New Gold Rush for Stolen Identities

The massive leak of over 16 billion credentials in 2025 didn’t just disappear into the void—it was monetized, shared, and resold across an increasingly organized underground ecosystem. From Telegram channels to dedicated marketplaces, cybercriminals have professionalized the distribution and monetization of stolen digital identities.

The leaked credentials are not merely dumped for notoriety—they’re sold in targeted bundles by region, sector, or platform, often using subscription-based models. These black-market credentials fuel account takeovers, business email compromises, and deepfake-enabled impersonations.

Key Monetization Channels:

- Telegram bot markets: Instant purchase of fresh logs and access tokens, often automated with search-by-email features.

- Genesis-style marketplaces: Offer full digital fingerprints, session cookies, and device emulations.

- Infostealer-as-a-Service (IaaS): Subscription models where cybercriminals access ready-to-use infection logs in real time.

- Darkweb credential catalogs: Indexed credential collections searchable by domain, country, or company.

Infographic: The black-market ecosystem for stolen digital identities in 2025. From Telegram bots to infostealer-as-a-service (IaaS), this economy fuels cybercrime and espionage.

💡 Strategic Insight: The value of an identity is no longer just tied to username-password pairs. Full access packages with session tokens, fingerprinting data, and behavioral metadata now fetch higher prices and enable stealthier attacks.

Sample Prices (June 2025):

| Item Type | Avg. Price (USD) |
|---|---|
| Gmail account with session cookie | $4.50 |
| Google Workspace admin access | $35–$200 |
| Crypto wallet seed phrase | $20–$500 |
| Full identity kit (passport scan + credentials) | $25–$100 |
| Access to developer tools (GitHub, Jira, etc.) | $8–$60 |

As these stolen credentials are traded and weaponized, their geopolitical consequences begin to surface—especially when the targets include critical sectors and foreign governments.

Credential Pricing Tiers

- Basic Logins: $1–$5 for email/password combos

- Session Cookies: $10–$50 depending on freshness and service

- Enterprise Access: $100–$500+ (especially SSO-enabled)

- Crypto Wallet Seeds: $200–$1,000+ depending on balance

- Developer Tokens & API Keys: $50–$300 depending on scope

Vendors often offer guarantees like “valid login or refund” and accept payments via Monero or USDT.

Market Share of Credential Types (2025)

🔹 35% Session Tokens

🔹 40% Email/Password Combos

🔹 25% Vault & Crypto Credentials

Strategic Insight:

Darknet platforms now operate like e-commerce sites, with search filters by region, platform, and even employer. The industrialization of cybercrime is no longer hypothetical; it’s fully operational.

These marketplaces don’t just sell access — they empower strategic sabotage. In the next section, we examine how hostile states and actors exploited this trove for cyber espionage and digital manipulation.

Geopolitical Exploitation: Cybercrime as a Proxy Tool

Behind the massive leak of over 16 billion credentials in mid-2025 lies more than just a financial motivation — it reveals a darker, more strategic exploitation of stolen identities for geopolitical influence and cyberespionage.

By classifying the data by language, region, platform, and collection date, malicious actors — including nation-state groups — have been able to build curated databases for targeted disinformation campaigns, surveillance, and infiltration of sensitive networks.

These activities blur the line between traditional cybercrime and state-sponsored operations. Initial Access Brokers (IABs), often the first sellers of stolen credentials, may unknowingly serve the interests of geopolitical actors looking for covert entry points into rival nations’ digital infrastructures.

Examples of geopolitical misuse include:

- Hijacking Telegram or WhatsApp groups to spread targeted disinformation during elections;

- Abusing access to GitHub, Notion, or internal platforms to steal trade secrets or diplomatic communications;

- Using compromised LinkedIn accounts to plant narratives, gain trust, or engineer influence within private or public organizations.

With stolen identities fueling everything from financial fraud to state-sponsored digital espionage, the black market has evolved into a strategic reservoir for hostile influence campaigns. This industrialization of cybercrime now forms the backbone of digital proxy wars — where attribution is murky, and plausible deniability is a feature, not a flaw.

These operations rely on the stealth and realism that infostealer data provides. Stolen credentials offer more than access — they offer credible digital identities. This transforms a simple malware victim into a proxy agent of influence.

💡 Strategic Insight

Cybercriminals aligned with geopolitical interests no longer need direct access to weaponized exploits. Instead, credential access allows infiltration with plausible deniability, turning stolen identities into digital mercenaries.

Through this lens, the 2025 mega-leak is not just a cybercrime event — it is a cyber-diplomatic weapon, affecting the very foundations of trust, identity, and sovereignty in cyberspace.

Next: Who is really behind the 2025 credential breach? The next section investigates how behaviorally tailored data sets give adversaries the ability to impersonate, influence, and infiltrate with near-perfect fidelity.

Threat Actor Attribution: Who Engineered the 2025 Mega-Leak?

The forensic evidence left behind by this massive credential breach paints a fragmented picture—but not an anonymous one. While attribution remains inherently complex in cyber operations, several indicators suggest the involvement of well-resourced actors, possibly operating under the protection—or direction—of nation-states.

Malware signatures and TTPs (tactics, techniques, and procedures) identified in the breach align with malware families historically associated with Eastern European cybercriminal ecosystems. Telegram bot exfiltration, GitHub token abuse, and advanced session hijacking are all markers of actor groups linked to data monetization and hybrid influence operations.

In addition, several C2 domains and payload hashes trace back to infrastructure previously tied to the cybercriminal collective “DC804”, an advanced threat group believed to have links with actors operating from Ukraine and surrounding regions.

💡 Strategic Insight: Attribution in cyberspace often relies on patterns, not confessions. In this case, the tooling, language settings, C2 server timings, and monetization channels suggest a fusion of cybercriminal profit motives and geopolitical disruption strategies.

Indicators of Nation-State Involvement

The operational scale of the breach—and its remarkably coordinated exfiltration tactics—raise suspicion that the attackers benefited from infrastructure support, safe havens, or even passive cooperation from government-aligned groups. This includes:

- Regional Target Bias: A disproportionate volume of credentials came from NATO countries and Asian democracies, while data from certain Eastern bloc regions appears underrepresented.

- Language Fingerprints: Several payloads and admin panels were configured in Russian and Ukrainian locales, with Cyrillic-based filename conventions.

- Operational Times: Attack traffic patterns followed Central European and Moscow Time business hours—suggesting actors worked standard office shifts, not criminal ad hoc hours.

- Tool Reuse: Obfuscation layers reused from malware previously attributed to Sandworm and Gamaredon, suggesting potential crossover or tooling leaks.
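The "Operational Times" indicator reduces to converting event timestamps into a candidate timezone and checking whether they cluster in weekday office hours. The following is a minimal sketch that assumes timezone-aware UTC timestamps and a 09:00 to 18:00 working day. Both assumptions, and the helper names, are illustrative. Moscow Time has been a fixed UTC+3 without daylight saving since 2014. Central European Time would need a DST-aware zone. In line with the caveat below, a business-hours pattern is weak evidence on its own.

```python
from collections import Counter
from datetime import datetime, timedelta, timezone
from typing import Iterable

# Moscow Time: fixed UTC+3, no daylight saving since 2014.
MSK = timezone(timedelta(hours=3), "MSK")


def local_hour_histogram(events_utc: Iterable[datetime], tz: timezone = MSK) -> Counter:
    """Count events per local hour-of-day; timestamps must be timezone-aware."""
    return Counter(ts.astimezone(tz).hour for ts in events_utc)


def office_hours_share(events_utc: Iterable[datetime], tz: timezone = MSK,
                       start: int = 9, end: int = 18) -> float:
    """Fraction of events on weekdays between start:00 and end:00 local time."""
    local = [ts.astimezone(tz) for ts in events_utc]
    if not local:
        return 0.0
    inside = sum(1 for ts in local if ts.weekday() < 5 and start <= ts.hour < end)
    return inside / len(local)
```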

Attribution Caveat: While these clues are strong, none alone constitute irrefutable proof. The breach may result from a hybrid operation blending financially motivated hackers with state-level beneficiaries or disinformation agendas.

Understanding the threat actors is crucial not just for retaliation, but for anticipating their next moves. The final section delivers actionable insights to help organizations strengthen their cyber posture.

Digital Forensics and Open-Source Intelligence (OSINT)

Independent analysts and cybersecurity firms noted that much of the leaked data first surfaced on Telegram channels used by known ransomware groups. Certain accounts had ties to earlier leaks like “RockYou2024” and “Mother of All Breaches”, indicating an ecosystem where access brokers share, trade, and repurpose stolen credentials.

The GitHub OAuth token abuse, for example, mirrors patterns seen during the SolarWinds follow-on attacks, though no direct link has been established.

Attribution Synthesis:

Behind every leaked credential may lie a chain of actors — from low-level brokers to geopolitical operatives. Understanding this chain is crucial to defend not just individual identities, but the sovereignty of institutions and nations. The final section delivers actionable strategies to mitigate these evolving threats and protect digital assets.

From Espionage to Counter-Espionage: Shifting the Power Balance

With the underground market thriving and nation-states exploiting identity data at scale, the only remaining question is: how can individuals and organizations fight back? In the next section, we explore advanced countermeasures — including hardware-based encryption tools like PassCypher HSM PGP and DataShielder NFC HSM — that offer a radically new approach to protecting digital identity, even when credentials are compromised.

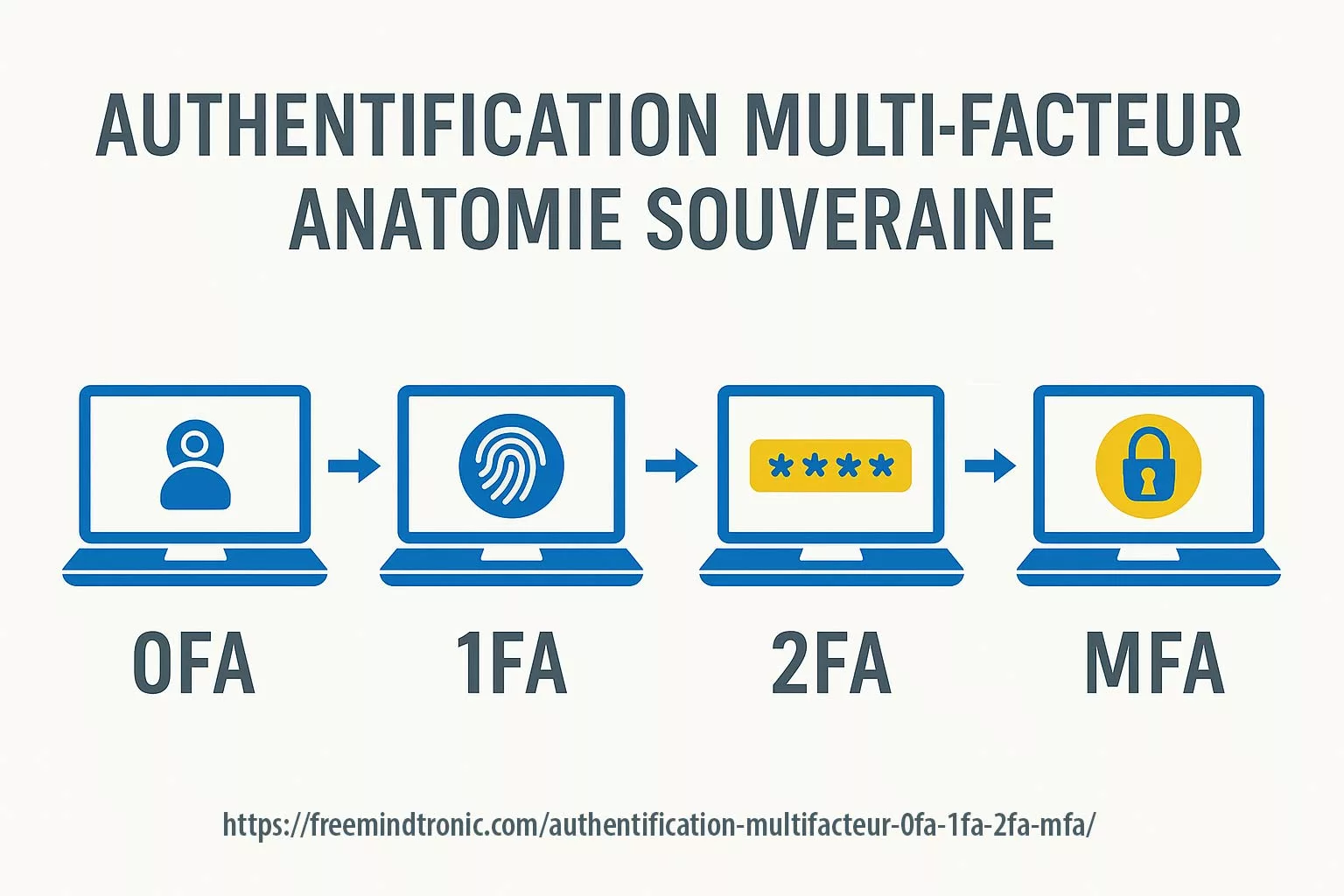

In the wake of the 2025 mega-leak, traditional cybersecurity hygiene practices — like rotating passwords or enabling 2FA — have proven insufficient against the industrialization of credential theft. Cybercriminals no longer need your password. They buy your session.

From Reactive Defense to Proactive Immunity

Infostealers now bypass 2FA by exfiltrating session cookies and device fingerprints, which are then sold in black-market ecosystems that emulate your digital identity in real time. The only viable defense lies outside the operating system, in tamper-proof hardware-based authentication.

What Should You Do After the Darknet Credentials Breach?

In response to this unprecedented leak, cybersecurity experts recommend a series of critical actions:

- Immediately change your passwords, especially for email, banking, and social media accounts.

- Enable Two-Factor Authentication (2FA) on all services that support it.

- Check if your email or credentials have been exposed using services like HaveIBeenPwned.

- Use a password manager to generate and store unique, strong passwords for each service.

- Consider switching to Passkeys (FIDO/WebAuthn) for better phishing resistance — though these are not immune to session hijacking.
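Two of these steps can be scripted. The following Python sketch generates a password with the standard `secrets` module and checks a password against the public Pwned Passwords range endpoint run by HaveIBeenPwned. That endpoint uses k-anonymity, so only the first five hex characters of the SHA-1 hash leave your machine. Per-account email lookups in the HIBP API require an API key and are not shown. The helper names, the alphabet, and the User-Agent string are assumptions. A password manager normally does the generation for you.

```python
import hashlib
import secrets
import string
import urllib.request
from typing import Tuple

ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Random password drawn with the cryptographically secure `secrets` module."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


def sha1_prefix_suffix(password: str) -> Tuple[str, str]:
    # k-anonymity: only the first 5 hex chars of the SHA-1 are sent to the API.
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    return digest[:5], digest[5:]


def count_in_range_response(body: str, suffix: str) -> int:
    """Parse the 'SUFFIX:COUNT' lines returned by the range endpoint."""
    for line in body.splitlines():
        candidate, _, count = line.strip().partition(":")
        if candidate.upper() == suffix:
            return int(count)
    return 0


def pwned_count(password: str) -> int:
    """Times the password appears in Pwned Passwords (performs a network call)."""
    prefix, suffix = sha1_prefix_suffix(password)
    req = urllib.request.Request(
        f"https://api.pwnedpasswords.com/range/{prefix}",
        headers={"User-Agent": "breach-hygiene-sketch"},
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        return count_in_range_response(resp.read().decode("utf-8"), suffix)
```

A non-zero result from `pwned_count` means the password has appeared in a known breach and should not be used anywhere.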

While these measures are helpful, they remain inherently software-based. Once a device is compromised by an infostealer, even 2FA and passkeys may not be enough.

Ready to reclaim control over your identity?

Discover how PassCypher NFC HSM and PassCypher HSM PGP help you defeat infostealers, session hijacks, and phishing — even when your device is compromised. Offline. Tamper-proof. And yours alone.

PassCypher: The Offline Hardware Identity Shield That Outclasses All Digital Authentication Systems

From password managers to biometric logins and FIDO2 passkeys, most digital authentication systems — even those marketed as “passwordless” — still rely on your operating system, browser, or cloud. This reliance creates an invisible attack surface — always present, and always exploitable.

PassCypher removes the need for trust in software or connected devices altogether. It’s not just another password replacement — it’s a paradigm shift in identity sovereignty.

Developed by Freemindtronic Andorra, the PassCypher suite — combining NFC HSM and HSM PGP — delivers a new security model that goes beyond password managers, passkeys, biometrics, or FIDO tokens.

Unlike traditional solutions, PassCypher never stores secrets on your phone, browser, cloud, or system memory. No master password. No trusted device. No syncing.

Only physical presence and cryptographic segmentation grant access — making phishing, malware, session hijacking, and deepfake impersonation technically impossible.

Passkeys vs PassCypher – When Zero Trust Becomes Zero Exposure

Beyond Trust: A security model where secrets are never exposed — not even after a breach.

What Top Experts Say About Passkeys — and What They Can’t Prevent

Despite their cryptographic rigor, passkeys still depend on trust in the local execution environment. As shown in Trail of Bits’ 2025 analysis and their 2023 investigation, authenticators embedded in browsers or OS-managed enclaves remain exposed to local code injection or manipulation.

- 🕷️ Browser-based malware can trick users into authenticating malicious domains.

- 💥 Counterfeit authenticators may leak private keys if firmware is compromised.

- 🎯 Recovery mechanisms in cloud-based passkey backups widen the attack surface.

PassCypher eliminates all these risks by removing browsers, operating systems, and the cloud from the authentication equation entirely. It stores segmented AES-256 keys in offline, air-gapped tamper-proof hardware. No shared memory. No fallback logic. Nothing exposed to runtime attacks. Not even trust in the hardware manufacturer is required — because the secrets never leave the NFC HSM or HSM PGP container.

🔐 While passkeys resist phishing, PassCypher makes it technically impossible by eliminating every single exposure vector — including those acknowledged by the FIDO/WebAuthn technical literature.

📌 As Trail of Bits concludes, “Passkeys are not silver bullets.” That’s why PassCypher exists.

Digital Authentication vs PassCypher: What Really Keeps You Safe?

Passkeys (FIDO2/WebAuthn) replace passwords with cryptographic key pairs. This reduces phishing attacks but does not eliminate malware threats. In most deployments, the private key is stored inside the OS or a browser-managed enclave — potentially accessible by advanced malware, as highlighted by Trail of Bits (2025).
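The phishing resistance described here comes from scoping. Under the WebAuthn specification, the authenticator data a passkey signs begins with the SHA-256 hash of the relying party ID. A credential registered for one domain therefore cannot produce a valid assertion for a lookalike domain. The following is a minimal server-side sketch of that check. It parses only the fixed 37-byte header (rpIdHash, flags, signCount) and omits signature verification, which needs the stored public key and an ECDSA or EdDSA library outside the standard library. The helper names are hypothetical. The check also illustrates the limit noted above: it proves which domain the assertion was made for, not that the device producing it is clean.

```python
import hashlib
import struct
from typing import NamedTuple


class AuthData(NamedTuple):
    rp_id_hash: bytes
    user_present: bool
    user_verified: bool
    sign_count: int


def parse_auth_data(auth_data: bytes) -> AuthData:
    # WebAuthn layout: 32-byte rpIdHash, 1 flags byte, 4-byte big-endian signCount.
    if len(auth_data) < 37:
        raise ValueError("authenticator data too short")
    flags = auth_data[32]
    (sign_count,) = struct.unpack(">I", auth_data[33:37])
    # Flag bit 0 = user present (UP), bit 2 = user verified (UV).
    return AuthData(auth_data[:32], bool(flags & 0x01), bool(flags & 0x04), sign_count)


def check_origin_binding(auth_data: bytes, expected_rp_id: str) -> bool:
    """True if the assertion was made for expected_rp_id with user presence.

    Signature verification over authData || SHA-256(clientDataJSON) is omitted.
    """
    parsed = parse_auth_data(auth_data)
    expected = hashlib.sha256(expected_rp_id.encode("ascii")).digest()
    return parsed.rp_id_hash == expected and parsed.user_present
```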

In addition, studies such as Specops (2024) and MDPI (2023) emphasize the vulnerabilities of passkeys in case of local malware, session hijacking, or cloud sync compromise.

PassCypher takes a radically different approach: keys are generated and stored entirely offline, in a tamper-proof, air-gapped NFC HSM or encrypted local container (PGP). The secret never appears in memory, isn’t accessible by any process, and remains invisible — even to an infected system.

| Feature | PassCypher HSM PGP (Browser Plugin) | PassCypher NFC HSM (Lite or Master) |
|---|---|---|
| Storage | AES-256 encrypted local vault | Hardware-encrypted memory (AES-256 + segmented key) |
| Session Protection | Browser sandboxing & anti-BITB | Offline key access via secure NFC or QR scan |
| Phishing Defense | Domain & URL validation | No online input or login required |
| Compromise Immunity | Immune to clipboard/infostealer malware | OS-isolated, no USB interface |
| Integration | Webmail, Web login, PGP support | Android NFC + Freemindtronic app |

Takeaway: Unlike passkeys and other passwordless systems, PassCypher doesn’t just improve convenience — it physically separates secrets from any exploitable digital environment. Whether browser plugin (PGP) or NFC hardware module, the data remains encrypted, segmented, and unreachable — even by advanced malware or AI-powered impersonators.

Structural Immunity: Up to 97% of Credential Attack Vectors Neutralized

According to public breach analyses and malware telemetry, over 95% of identity-based cyberattacks exploit a narrow set of vectors: phishing (including BITB), session hijacking, OS-level malware, token reuse, and cloud-synced credential leaks.

PassCypher neutralizes these threats by architectural design. Instead of patching surface-level symptoms, it eliminates structural exposure entirely:

- 🔐 AES-256 CBC segmented keys — never stored in RAM, browser memory, or synced to the cloud

- 📴 Offline-by-default storage — in local encrypted vaults (HSM PGP) or air-gapped NFC hardware (NFC HSM)

- 📲 Activated only by physical presence — via secure NFC scan or QR code, no trusted device dependency
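PassCypher's own segmented-key scheme is proprietary and is not described in this article. The sketch below is only a generic illustration of the underlying idea that no single segment reveals the key. It uses the textbook 2-of-2 XOR secret split: one segment is uniformly random, the other is the key XORed with it, and neither alone carries any information about the key. Where each segment would be stored, for example on a hardware module versus another factor, is an assumption left open. The helper names are hypothetical.

```python
import secrets
from typing import Tuple


def split_key(key: bytes) -> Tuple[bytes, bytes]:
    """Split a key into two segments; each one alone is uniformly random noise."""
    pad = secrets.token_bytes(len(key))
    share = bytes(k ^ p for k, p in zip(key, pad))
    return pad, share


def join_key(segment_a: bytes, segment_b: bytes) -> bytes:
    """Recombine the key by XOR; both segments are required."""
    if len(segment_a) != len(segment_b):
        raise ValueError("segment length mismatch")
    return bytes(a ^ b for a, b in zip(segment_a, segment_b))
```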

🧩 PassCypher isn’t just for usernames and passwords. It safeguards:

- 🗝️ SSH private keys with passphrases

- 🔑 TOTP/HOTP secrets with auto-submitted PINs

- 📦 PGP signing and encryption keys

- 🧱 Full-disk encryption keys (BitLocker, VeraCrypt, TrueCrypt)
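TOTP seeds deserve the same protection as passwords because anyone holding the shared secret can compute exactly the codes the user sees. The following Python sketch implements the public standard algorithm (HOTP from RFC 4226, TOTP from RFC 6238) with the common SHA-1, 30-second, 6-digit defaults. It is not PassCypher's implementation.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HOTP (RFC 4226): HMAC-SHA1 over the 8-byte counter, then dynamic truncation."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """TOTP (RFC 6238): HOTP with the counter derived from Unix time."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step), digits)
```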

Multiple independent studies, from Trail of Bits, Specops, and MDPI, confirm that offline, hardware-rooted, and segmented identity models can prevent up to 97% of credential exploitation paths, far beyond the 50–60% blocked by cloud-dependent passkey systems.

This isn’t just breach mitigation — it’s breach immunity. Even advanced AI-powered impersonation or deepfake-based attacks can’t decrypt what’s never exposed. With PassCypher, identity protection becomes a matter of physics, not policy.

🛡️ Active BITB Protection — Defusing a Common Entry Point in Credential Breaches

One of the most exploited attack vectors behind large-scale credential leaks — such as the 2025 Darknet dump of over 16 billion valid identities — is the Browser-in-the-Browser (BITB) phishing technique. It creates fake login popups that are visually identical to real providers (Google, Microsoft, etc.), tricking users into entering valid credentials or initiating trusted sessions.

PassCypher HSM PGP goes beyond simple login isolation. Its embedded BITB defense mechanism automatically destroys iframe-based redirections and, in semi-automatic mode, flags suspicious redirect URLs before they reach the user’s screen — even after authentication. This makes it a rare solution capable of disrupting phishing operations even after login has occurred.
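Flagging a suspicious redirect comes down to comparing where a login URL really points with where it claims to point. The following is a minimal sketch of that comparison. It assumes an allowlist of legitimate identity-provider domains and accepts only HTTPS URLs whose parsed host is an allowed domain or one of its subdomains. It rejects lookalikes such as `accounts.google.com.evil.top` and userinfo tricks such as `accounts.google.com@evil.top`. It is not PassCypher's implementation and does not handle homoglyph (IDN) lookalikes. A full BITB defense must also inspect the page itself, because a fake popup is drawn inside the attacker's page and its displayed address bar is just pixels.

```python
from typing import Iterable
from urllib.parse import urlsplit


def host_matches(url: str, allowed_domains: Iterable[str]) -> bool:
    """True only for https URLs whose host is an allowed domain or a subdomain of it."""
    parts = urlsplit(url)
    if parts.scheme != "https" or not parts.hostname:
        return False
    # .hostname is lower-cased and excludes any "user@" prefix and port.
    host = parts.hostname.rstrip(".")
    for domain in allowed_domains:
        domain = domain.lower().rstrip(".")
        if host == domain or host.endswith("." + domain):
            return True
    return False
```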

In a world where deepfakes and session hijacks are automated, real-time sanitization of the browser environment isn’t a luxury — it’s a necessity.

📚 Want to See PassCypher in Action?

Curious about how PassCypher actually works? These in-depth guides walk you through the full architecture, usage, and security model:

- How PassCypher HSM PGP Works – Full Tutorial

- PassCypher NFC HSM – Secure, Convenient Hardware Password Manager

Learn how air-gapped key storage, NFC hardware, and PGP plugins create a tamper-proof authentication flow — even on compromised devices.

Security Without Exposure — Not Even After Intrusion

Secrets remain continuously encrypted using AES-256 CBC with segmented keys. No software, hardware, or network-level incident can expose them — because decryption requires multiple simultaneous trust conditions: native 2FA, origin validation, and active anti-BITB protection.
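The requirement that several trust conditions hold simultaneously can be sketched as an all-or-nothing gate. In this hypothetical model, the three conditions named above (native 2FA, origin validation, anti-BITB status) arrive as plain booleans from the caller; how PassCypher actually evaluates each one is not described here, and all names are illustrative.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class AccessContext:
    """Hypothetical snapshot of the checks made for one access attempt."""
    second_factor_ok: bool   # native 2FA succeeded
    origin_validated: bool   # requesting origin matches the stored entry
    anti_bitb_active: bool   # browser-in-the-browser protection is running


def failed_conditions(ctx: AccessContext) -> List[str]:
    """List every condition that did not hold for this attempt."""
    checks = {
        "2FA": ctx.second_factor_ok,
        "origin": ctx.origin_validated,
        "anti-BITB": ctx.anti_bitb_active,
    }
    return [name for name, ok in checks.items() if not ok]


def release_secret(ctx: AccessContext, decrypt: Callable[[], bytes]) -> bytes:
    """Run the decrypt callback only if every condition holds right now."""
    missing = failed_conditions(ctx)
    if missing:
        raise PermissionError("access denied, failed: " + ", ".join(missing))
    return decrypt()
```

Passing decryption as a callback means the decrypt step never runs when any condition fails, instead of decrypting first and discarding the result afterwards.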

This isn’t reactive security through erasure. It’s proactive immunity through structural inaccessibility, enforced at every single access attempt.

Deepfake-Proof Identity: Why Hardware Authentication Is Immune to AI Impersonation

As AI-generated deepfakes evolve to mimic voices, faces, and even behavioral biometrics, traditional identity verification methods — including facial recognition, fingerprint scans, and voice authentication — are becoming dangerously unreliable. Identity is no longer about who you are. It’s about what you control offline.

AI Can Fake You — But Not Your NFC HSM

Today, attackers can execute biometric spoofing attacks using just a smartphone and generative AI tools.

In contrast, PassCypher NFC HSM and PassCypher HSM PGP store secure hardware keys that no remote attacker — not even one powered by AI — can forge, duplicate, or intercept.

Segmentation: The Ultimate Trust Factor

The PassCypher suite introduces segmented key authentication, meaning your identity is only accessible if you physically possess a specific hardware module and successfully authenticate locally via PIN, ID Phone, or a combination. No AI can simulate this chain of trust.
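One generic way to make a secret depend on both possession and knowledge is to derive the unlocking key from a segment held by the hardware module and a locally entered PIN, so that neither factor alone is enough. The sketch below uses PBKDF2-HMAC-SHA256 and HMAC from the Python standard library. The iteration count, salt handling, and names are assumptions for illustration, not PassCypher's documented key schedule.

```python
import hashlib
import hmac
import secrets

PBKDF2_ITERATIONS = 600_000  # assumption; tune for the target device


def derive_unlock_key(hardware_segment: bytes, pin: str, salt: bytes,
                      iterations: int = PBKDF2_ITERATIONS) -> bytes:
    """Combine a possession factor (hardware segment) with a knowledge factor (PIN).

    The PIN is stretched with PBKDF2-HMAC-SHA256, then keyed with the hardware
    segment via HMAC-SHA256, yielding a 256-bit key that requires both inputs.
    """
    stretched = hashlib.pbkdf2_hmac("sha256", pin.encode("utf-8"), salt, iterations)
    return hmac.new(hardware_segment, stretched, hashlib.sha256).digest()


if __name__ == "__main__":
    segment = secrets.token_bytes(32)  # would live inside the hardware module
    salt = secrets.token_bytes(16)     # stored alongside the encrypted vault
    print(derive_unlock_key(segment, "4821", salt).hex())
```

A short PIN can still be brute-forced by someone who holds both the segment and the salt, which is why hardware modules typically also limit the number of PIN attempts.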

Zero Biometrics, Zero Risk

- No facial data stored or processed

- No fingerprint scans to forge or replay

- No voiceprint to capture or spoof

- Only encrypted secrets stored offline and validated via segmented trust

Hardware Beats AI

When authentication relies on possession, segmentation, and local control, AI impersonation becomes irrelevant. PassCypher doesn’t care what you look or sound like. It only reacts to what you hold — and what you’ve physically secured.

Because this model relies on no biometric, behavioral, or system-level data, there is nothing of that kind to fake, phish, or leak. It’s a trustless-by-design authentication that doesn’t rely on third parties, devices, or assumptions, just physical cryptographic proof.

Resilient Identity: From AI-Resistant Profiles to Hardware-Backed Sovereignty

As generative AI evolves, the line between real and synthetic identities continues to blur. In this age of digital impersonation, resilient identity isn’t just about proving who you are — it’s about proving who you are not.

Why Traditional Identity Checks Fail

- Biometric spoofing: Deepfake engines now bypass facial and voice recognition systems.

- Document forgery: AI-powered scripts auto-generate fake ID cards, passports, and licenses.

- Session token theft: Even MFA can be bypassed using session tokens stolen by infostealers.

PassCypher NFC HSM: Enforcing Digital Authenticity at the Hardware Layer

PassCypher NFC HSM devices (Lite or Master editions) enforce identity verification using tamper-proof, air-gapped NFC modules. Each action — login, message decryption, or key sharing — requires physical presence and device trust pairing. In contrast to centralized identity providers, PassCypher works offline, eliminates impersonation risks, and gives users full control of authentication without disclosing biometric or personal data.
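Requiring physical presence for each action is commonly implemented as challenge-response: the verifier issues a fresh random nonce, and only a device holding the secret can return the matching answer. The sketch below shows that generic pattern with HMAC-SHA256 and a shared device secret. It is an assumption about how such a check could work, not a description of PassCypher's NFC protocol, and the class and function names are hypothetical.

```python
import hashlib
import hmac
import secrets
from typing import Set


class Verifier:
    """Issues one-time challenges and checks responses from a paired device."""

    def __init__(self, device_secret: bytes) -> None:
        self._secret = device_secret
        self._pending: Set[bytes] = set()

    def new_challenge(self) -> bytes:
        nonce = secrets.token_bytes(16)
        self._pending.add(nonce)
        return nonce

    def verify(self, nonce: bytes, response: bytes) -> bool:
        if nonce not in self._pending:
            return False  # unknown or already used nonce: blocks replay
        self._pending.discard(nonce)
        expected = hmac.new(self._secret, nonce, hashlib.sha256).digest()
        return hmac.compare_digest(expected, response)  # constant-time compare


def device_respond(device_secret: bytes, nonce: bytes) -> bytes:
    """What the hardware token would compute when tapped (hypothetical)."""
    return hmac.new(device_secret, nonce, hashlib.sha256).digest()
```

Because each nonce is single-use, a recorded response is worthless to an attacker. Many hardware authenticators use asymmetric signatures instead, so the verifier stores no secret at all; the shared-secret version is used here only for brevity.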

Strategic Takeaway

Resilient identity isn’t verified in the cloud — it’s sealed in hardware you control. As threat actors use AI to clone users, organizations must adopt cryptographic proof-of-personhood that cannot be simulated, spoofed, or replicated.

The Future of Authentication: Biometrics, AI and Their Limitations

As threats grow more sophisticated, the push toward biometric and AI-assisted identity verification systems is accelerating. From fingerprint readers to facial recognition and voice authentication, the world is transitioning toward “who you are” rather than “what you know.” But while biometrics offer convenience, they are not immune to compromise.

AI Can Fake You

Deepfake technologies now allow attackers to replicate biometric features using stolen media — including voice samples, images, and videos. In some cases, AI-generated fingerprints have been used to bypass sensor-based authentication systems. AI is no longer just a tool for defense. It’s a weapon in the arsenal of identity theft.

Biometrics = Permanent Risk

Unlike passwords, you can’t change your fingerprint or retina scan after a data breach. If a biometric identifier is stolen, it’s compromised forever — and the attacker can reuse it globally. That makes biometrics **inherently non-revocable**, raising legal and operational risks for long-term security strategies.

Offline Hardware vs. AI-Based Spoofing

PassCypher NFC HSM offers a radically different model: it keeps authentication completely offline and shields your identity from any AI-based spoofing attempt.

- It stores all cryptographic keys offline.

- It performs authentication locally via NFC or QR code.

- It avoids storing, transmitting, or requiring any biometric data — ever.

> Strategic Insight: The future of secure identity is not more AI but less exposure. Air-gapped hardware offers what AI cannot: trust-by-design, not trust-by-illusion.

💡 For journalists, executives, developers and activists, staying under the radar may mean staying out of the biometric web entirely.

Credential leaks don’t just enable fraud; they serve as a gateway for **corporate espionage**. Stolen sessions from executives, developers, or sysadmins can offer deep access to intellectual property, internal tools, and strategic documents. Today’s digital identity is not just personal; it’s **privileged**.

Session Hijack = Invisible Espionage

A hijacked session token grants immediate access to internal dashboards, file repositories, and business communications — **without triggering login alerts**.

This makes session theft the preferred tactic for stealthy reconnaissance and sabotage.
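Because a hijacked token never passes through the login path, detection has to happen on every request. Here is a minimal sketch, assuming the server records a coarse context (IP network and user agent) when the session is created and compares it on each use. The field names and the /24 (IPv4) and /64 (IPv6) granularity are illustrative choices.

```python
import ipaddress
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SessionContext:
    ip: str
    user_agent: str


def coarse_network(ip: str):
    """Map an address to its /24 (IPv4) or /64 (IPv6) network."""
    addr = ipaddress.ip_address(ip)
    prefix = 24 if addr.version == 4 else 64
    return ipaddress.ip_network(f"{addr}/{prefix}", strict=False)


def reuse_anomalies(at_login: SessionContext, now: SessionContext) -> List[str]:
    """Return reasons this request looks like a replayed session token."""
    reasons = []
    if coarse_network(at_login.ip) != coarse_network(now.ip):
        reasons.append("network changed")
    if at_login.user_agent != now.user_agent:
        reasons.append("user agent changed")
    return reasons
```

This is a weak signal on its own: infostealers often capture the user agent along with the cookie, and attackers can proxy traffic through the victim's own machine. It only shows why checks must run on every request, not just at login.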


From Source Code to Insider IP Theft

When credentials from platforms like GitHub, Jira, Confluence or Slack are leaked, attackers can:

- Read source code and introduce backdoors

- Monitor R&D pipelines in stealth mode

- Access procurement and negotiation files

- Sabotage infrastructure (e.g., deleting repositories or staging ransomware)

Case in Point: Silent Access, Maximum Damage

In 2024, multiple leaks led to exfiltration of sensitive data from aerospace, energy, and pharmaceutical sectors — not via malware, but through legitimate session reuse by unauthorized actors. By the time anomalies were noticed, the attackers had already left.

> Strategic Insight: The greatest threat is not breach but invisibility. Session hijacks allow adversaries to operate as if they were insiders — with zero friction.

Advanced persistent threats don’t hack your system. They **borrow your login** — and act as if they built it.

The 2025 identity leak doesn’t just raise cybersecurity concerns — it triggers **legal and compliance minefields**. Organizations impacted by session hijacks and credential resale now face scrutiny under global data protection frameworks.

GDPR, NIS2, and Beyond

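Stolen sessions can qualify as **personal data breaches** under laws such as:

- GDPR (EU): Controllers must notify the supervisory authority of a personal data breach without undue delay and, where feasible, within 72 hours of becoming aware of it, unless the breach is unlikely to result in a risk to individuals.

- NIS2 (EU): Essential and important entities face stricter cybersecurity risk-management and incident-reporting obligations.

- CCPA (California): Consumers can sue when certain personal information is breached because a business failed to maintain reasonable security.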

Failure to comply may result in **multi-million euro penalties** and mandatory audits.
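The GDPR 72-hour window runs from the moment the controller becomes aware of the breach, not from when the breach occurred. Here is a small sketch for computing that deadline with timezone-aware timestamps; the function names are illustrative.

```python
from datetime import datetime, timedelta, timezone

GDPR_NOTIFICATION_WINDOW = timedelta(hours=72)  # GDPR Article 33(1)


def gdpr_notification_deadline(became_aware: datetime) -> datetime:
    """Latest time to notify the supervisory authority, where feasible."""
    if became_aware.tzinfo is None:
        raise ValueError("use a timezone-aware datetime")
    # Convert to UTC first so the 72 hours are elapsed time,
    # unaffected by daylight-saving changes in local time zones.
    return became_aware.astimezone(timezone.utc) + GDPR_NOTIFICATION_WINDOW


def hours_remaining(became_aware: datetime, now: datetime) -> float:
    return (gdpr_notification_deadline(became_aware) - now).total_seconds() / 3600


if __name__ == "__main__":
    aware = datetime(2025, 6, 18, 9, 30, tzinfo=timezone.utc)
    print(gdpr_notification_deadline(aware).isoformat())  # → 2025-06-21T09:30:00+00:00
```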

Employer Liability: A Growing Vector

When attackers hijack an employee’s session to commit fraud or espionage, the legal burden can shift onto the company, which may be held responsible for:

- Failure to implement sufficient identity protection

- Negligence in breach containment

- Insufficient logging and detection

This risk is especially high for sectors with high-value intellectual property (finance, pharma, aerospace).

Compliance Requires More Than Policy

Legal experts now recommend:

- Hardware-based identity proofing for high-privilege roles

- Real-time session traceability with hardware tokens

- Decentralized identity management — to reduce cloud trust exposure

> Strategic Insight: Laws were built around passwords and systems. The future of compliance is built around sessions and people.

The next compliance wave isn’t about passwords. It’s about proving you can detect, revoke, and replace stolen digital identities.

Final Strategic Insight – A New Identity Paradigm

The 2025 credential mega-leak is not just another breach. It’s a **paradigm shift in the mechanics of digital trust**. We no longer face isolated password leaks. We face the full industrialization of identity emulation, driven by real-time session resale, hardware fingerprinting, and AI-powered impersonation. This demands a new model.

Decentralization + Hardware + Anonymity

The future of identity protection starts when users reclaim control. We must move identity offline, anchor it in tamper-proof hardware, and decentralize it entirely. In this model, users don’t just get “authenticated”; they carry their own cryptographic shield by default. This approach:

- Rejects dependence on cloud trust or biometric central servers

- Prevents identity theft at the root: session-level interception

- Empowers sovereign control of credentials and private keys

From Defense to Deterrence

Legacy MFA and password managers cannot scale against AI-enhanced identity fraud. Instead, a shift is needed:

- From credential storage to session immunity

- From cloud-based authentication to air-gapped, tamper-proof hardware

- From password rotation to identity isolation by design

Users must adopt hardware-segmented identity as the only viable long-term strategy — one they control directly, one that remains invisible to malware, and one that even AI cannot forge.

Rebuilding Digital Trust in the Age of AI-Driven Identity Fraud

The leak of over 16 billion valid credentials doesn’t just reveal the failure of perimeter defenses — it confirms something deeper: the collapse of implicit digital trust.

Today, cybercriminals exploit generative AI to synthesize voices, faces, and deepfake videos in real time, using nothing more than data stolen from infostealer logs. In this new reality, a password no longer proves identity. A token means little. Even a voice over the phone could be fake.

To counter this, we must shift the burden of proof back to the individual. Only the user — physically present, cryptographically segmented, and offline — can serve as the unforgeable anchor of trust.

Solutions like PassCypher HSM PGP and PassCypher NFC HSM already operate on this principle. They transform users from the weakest link into the root of trust, removing the need to delegate authentication to vulnerable digital infrastructure.

But technology alone isn’t enough. This transformation begins by radically shifting our mindset: we must stop hosting identity in the cloud, syncing it across devices, or delegating it to third parties — and instead, start making it personal, portable, and verifiable by design.

Until we embrace this model, even the most complex credentials remain exploitable.

Now is not the time for incremental security patches. Now is the time to reinvent authentication from the ground up.